Informatica provides the following four error tables:ĭuring ETL execution, records which fail to be inserted in the target table (for example, some records violate a constraint) are placed in the Informatica PowerCenter error tables. The logs hold information about errors encountered, during execution. The results of each ETL execution is logged by Informatica.

OPVA's data model includes aggregate tables and a number of indexes, designed to minimize query time.īy default, bulk load is disabled for all SILs.

This strategy is termed as Slowly Changing Dimension approach 1. SILs have the following attributes:Ĭoncerning changes to dimension values over time, OPVA overwrites old values with new ones. The SIL extracts the normalized data from the staging table and inserts it into the data warehouse star-schema target table. There is one SIL mapping for each target table. Navigate to the Mappings subtab and select 'Bulk/Normal' under Target Load type. Navigate to Session in a workflow and edit the task properties. Perform the following steps to set the load option: Setting Bulk or Normal load option should be done at Workflow session in Informatica. You can also restart load if the load is interrupted. Normal load is faster, if data volume is sufficiently small. It is intended to be used for updates to the data warehouse, once population has been completed. However, if load is interrupted (for example, disk space is exhausted, power failure), load cannot be restarted in the middle you must restart the load. Bulk load is faster, if data volume is sufficiently large. It is intended for use during initial data warehouse population. Incremental submission mode: OPVA-supplied ETL uses timestamps and journal tables in the source transactional system to optimize periodic loads.īulk and normal load: Bulk load uses block transfers to expedite loading of large data volume. There is one SDE mapping for each target table, which extracts data from the source system and loads it to the staging tables. The staged data is transformed using the source-independent loads (SILs) to star-schema tables, where such data are organized for efficient query by the Oracle BI Server. This is the architectural feature that accommodates external database sourcing. The SDE programs map the transactional data to staging tables, in which the data must conform to a standardized format, effectively merging the data from multiple, disparate database sources. 
Note that you are responsible for resolving any duplicate records that may be created as a consequence. However, you can also define SDE mappings from additional external sources that write to the appropriate staging tables.

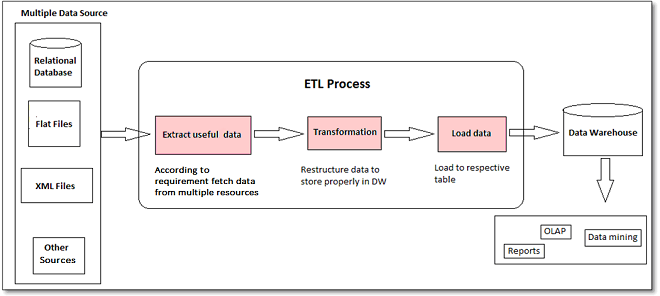

While the OPVA data model supports data extraction from multiple sources, OPVA only includes source-dependent extract (SDE) mappings for the Oracle Argus Safety database. Set up as a recurring job in DAC, the Extraction, Transformation, and Load process (ETL) is designed to periodically capture targeted metrics (dimension and fact data) from multiple Safety databases, transform and organize them for efficient query, and populate the star-schema tables.
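The two load behaviors described in this post, overwriting old dimension values with new ones (Slowly Changing Dimension approach 1) and setting aside records that violate a constraint, can be illustrated with a small sketch. It assumes an in-memory SQLite database standing in for the warehouse. The names `d_product`, `load_errors`, `make_warehouse`, and `run_sil_load` are hypothetical and are not OPVA or PowerCenter objects, and the real PowerCenter error tables have their own structure.

```python
import sqlite3


def make_warehouse():
    """Create a toy dimension table and a toy error table (hypothetical names)."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE d_product ("
        "product_id INTEGER PRIMARY KEY, product_name TEXT NOT NULL)"
    )
    conn.execute(
        "CREATE TABLE load_errors ("
        "product_id INTEGER, product_name TEXT, reason TEXT)"
    )
    return conn


def run_sil_load(conn, staged_rows):
    """Load staged (product_id, product_name) rows and return (loaded, rejected)."""
    loaded = rejected = 0
    for product_id, product_name in staged_rows:
        try:
            # Approach 1: overwrite the existing value in place, keeping no history.
            cur = conn.execute(
                "UPDATE d_product SET product_name = ? WHERE product_id = ?",
                (product_name, product_id),
            )
            if cur.rowcount == 0:
                # Key not present yet, so insert a new dimension row.
                conn.execute(
                    "INSERT INTO d_product (product_id, product_name) VALUES (?, ?)",
                    (product_id, product_name),
                )
            loaded += 1
        except sqlite3.IntegrityError as exc:
            # Constraint violation: park the record in the error table for review.
            conn.execute(
                "INSERT INTO load_errors (product_id, product_name, reason) "
                "VALUES (?, ?, ?)",
                (product_id, product_name, str(exc)),
            )
            rejected += 1
    conn.commit()
    return loaded, rejected


if __name__ == "__main__":
    warehouse = make_warehouse()
    print(run_sil_load(warehouse, [(1, "Drug A"), (2, "Drug B")]))
    print(run_sil_load(warehouse, [(1, "Drug A v2"), (3, None)]))
    print(warehouse.execute("SELECT * FROM d_product ORDER BY product_id").fetchall())
    print(warehouse.execute("SELECT product_id, reason FROM load_errors").fetchall())
```

In the second call, the row for key 1 replaces the earlier name rather than adding a second version, and the row with a missing name violates the `NOT NULL` constraint and ends up in `load_errors` instead of the dimension table.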
